Using GPT-4 to Make a Website that Grows

GPT-4 is an extremely powerful tool, and it can be used to create websites that grow as you explore them.

GPT-4 is amazing. Seriously, amazing. It isn't without faults—of course—but compared to what we previously had access to, it's blown me away.

Around two weeks ago, OpenAI (the same people behind ChatGPT and GPT-3) released a new language model called GPT-4. These language models can do "language-related" tasks—summarize text, translate messages, and in our case, automatically write programming articles.

That's what this article is about. These language models are great at cost saving—making well-written articles at a fraction of the cost and time—but I think there's something that makes these models even more important. They can do things that simply weren't possible before—and not just for a lack of economic resources.

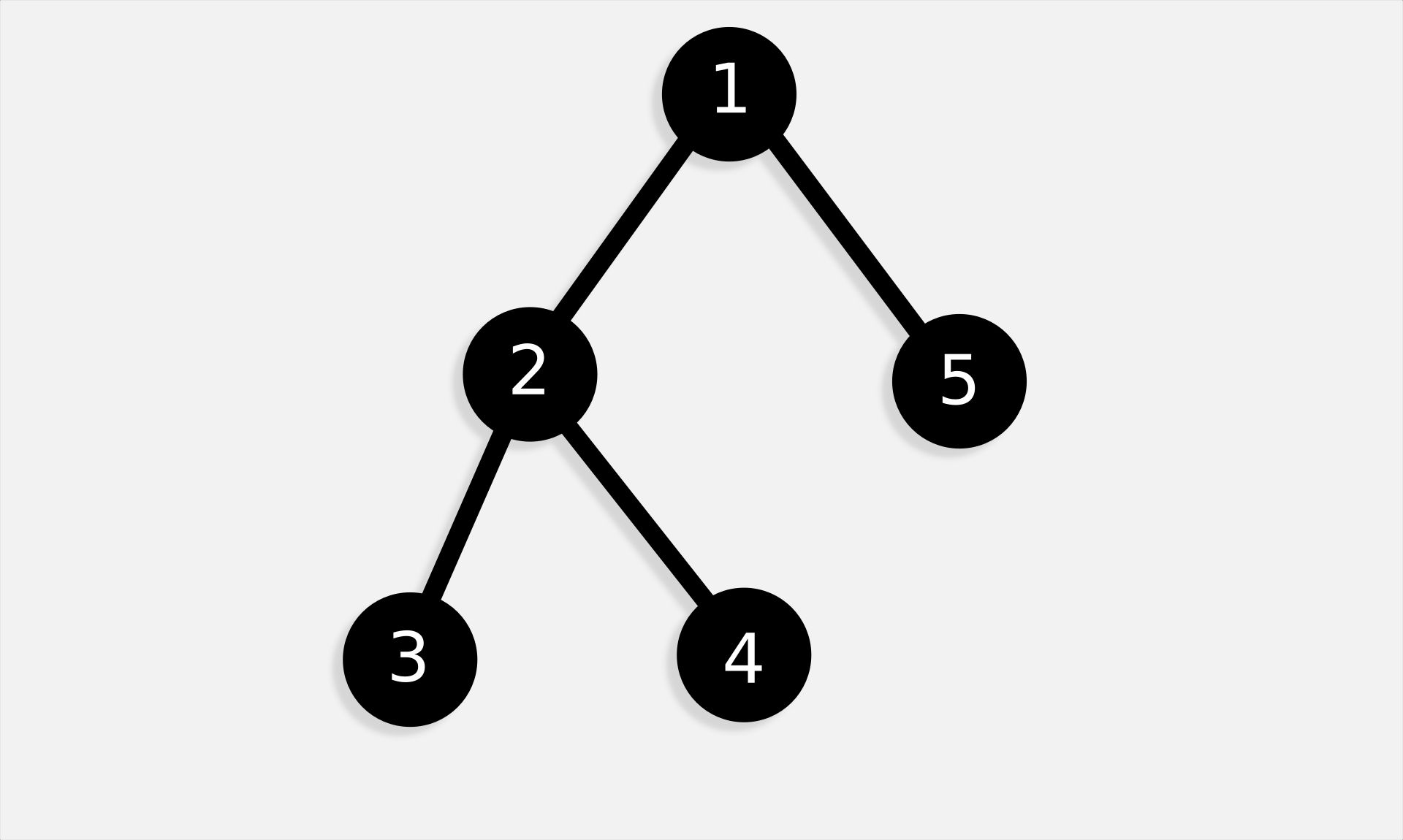

Think about how these models can write articles from scratch. What happens if you tell one to link to other articles? Well, what if those other articles don't exist? AI can make them. And then those articles will link to others, which link to others, and those others link to even more articles. What do you get at the end of all this? A website that grows as you explore it.

Try it Out

Before we dive into the nitty-gritty of how this works (and we will, I promise), why don't we look at an actual article? Here's a couple—take your pick:

For safety reasons, you're only allowed to generate new articles if you're signed in. That means you'll eventually hit a dead end if you don't have an account. I'll get into why later (for now, just know that generating a ton of articles is expensive, so it's better to put some limit on it), but keep in mind that the site only grows if you have an account.

The Nitty-Gritty

If all's gone well, I've done a decent job at explaining the basic idea. But, just in case, this works as follows:

1. Click a link to a programming article.
2. If it doesn't exist, generate it.
3. The article will have links to other articles (that may or may not exist). For each of them, go back to step 1.

Essentially, we have an article that's been generated by AI. That AI is instructed to link to other articles (whether or not they exist). Then, when someone clicks one of these links, that article is generated (or displayed, if it already exists), restarting this cycle.
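The cycle above can be sketched in a few lines of Python. This is a minimal sketch under some loud assumptions, not Cratecode's actual code: the `/articles/<slug>` link format, the in-memory `store` dictionary, and the `fake_generate` stand-in for the language model are all hypothetical names made up for illustration.

```python
import re
from typing import Callable, Dict, List, Optional

# Assumed link format for this sketch: markdown links to /articles/<slug>.
ARTICLE_LINK = re.compile(r"\]\(/articles/([a-z0-9-]+)\)")


def extract_slugs(body: str) -> List[str]:
    """Return the slugs of every article linked from a markdown body."""
    return ARTICLE_LINK.findall(body)


def get_or_generate(
    slug: str,
    store: Dict[str, str],
    generate: Callable[[str], str],
    signed_in: bool,
) -> Optional[str]:
    """Return the stored article, generating it first if it is missing.

    Returns None when the article is missing and the visitor is not
    signed in (the "dead end" case).
    """
    if slug in store:
        return store[slug]
    if not signed_in:
        return None
    body = generate(slug)  # In a real system, a call to a language model.
    store[slug] = body
    return body


def fake_generate(slug: str) -> str:
    """Hypothetical stand-in for the model: links to two new slugs."""
    return (
        f"# {slug}\n"
        f"See [basics](/articles/{slug}-basics) and "
        f"[advanced](/articles/{slug}-advanced)."
    )
```

Note that linked articles are not generated eagerly. Nothing is created for a link until someone actually clicks it, which is what keeps the cost tied to real exploration.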

Photo Credit: Nikin.

The First Article

You may have noticed a pretty glaring issue with this scheme of creating articles. It works well enough when exploring concepts, but what if you want a specific article? It's possible to search for one, but what if it doesn't exist? Maybe it doesn't exist yet, or there's simply no way for the current articles to generate the one you're looking for.

This is actually a pretty big problem. It means, despite being automatically generated, this knowledge-base can never be fully "complete"—it won't be able to help users with every problem. This is by no means a new issue though. Any wiki/knowledge-base has the same issue of incompleteness.

It's a big problem, but by no means insurmountable. I believe one of the reasons this feels like a problem comes down to how we conceptualize search engines. Search engines index content on the web, directing people to whatever they judge most relevant. If something doesn't exist, the search results won't direct a user to it. That's a pretty simple (obvious, really) idea, but I think it's worth considering.

Equally worth considering is AI-based search. Using AI like this is obviously appealing. It tailors its results specifically to the user, and can give them content that's more relevant than what search engines return. But AIs don't search. They invent. They make up. They hallucinate.

This article doesn't exist at the time of writing, but might be made in the future.

AI doesn't work for search. Not at all. But that doesn't mean it isn't relevant either. It's very, very good at making up articles that don't exist. And what's the driving force behind the AI-generated knowledge-base? AIs making up articles that don't exist.

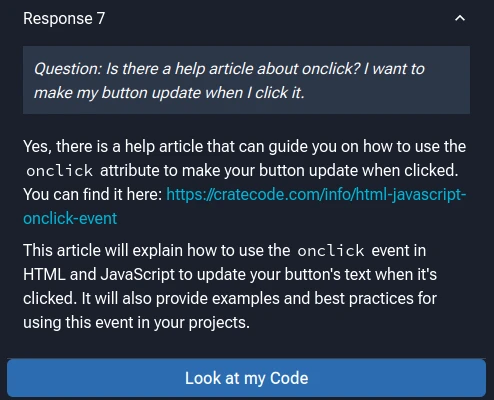

AI Assistant

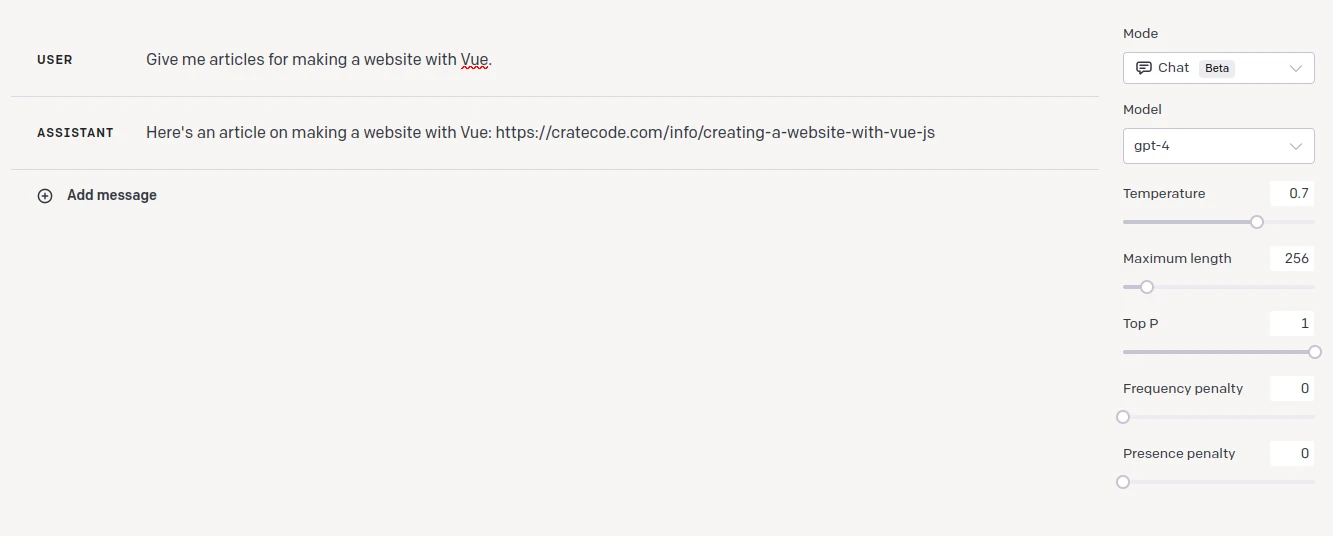

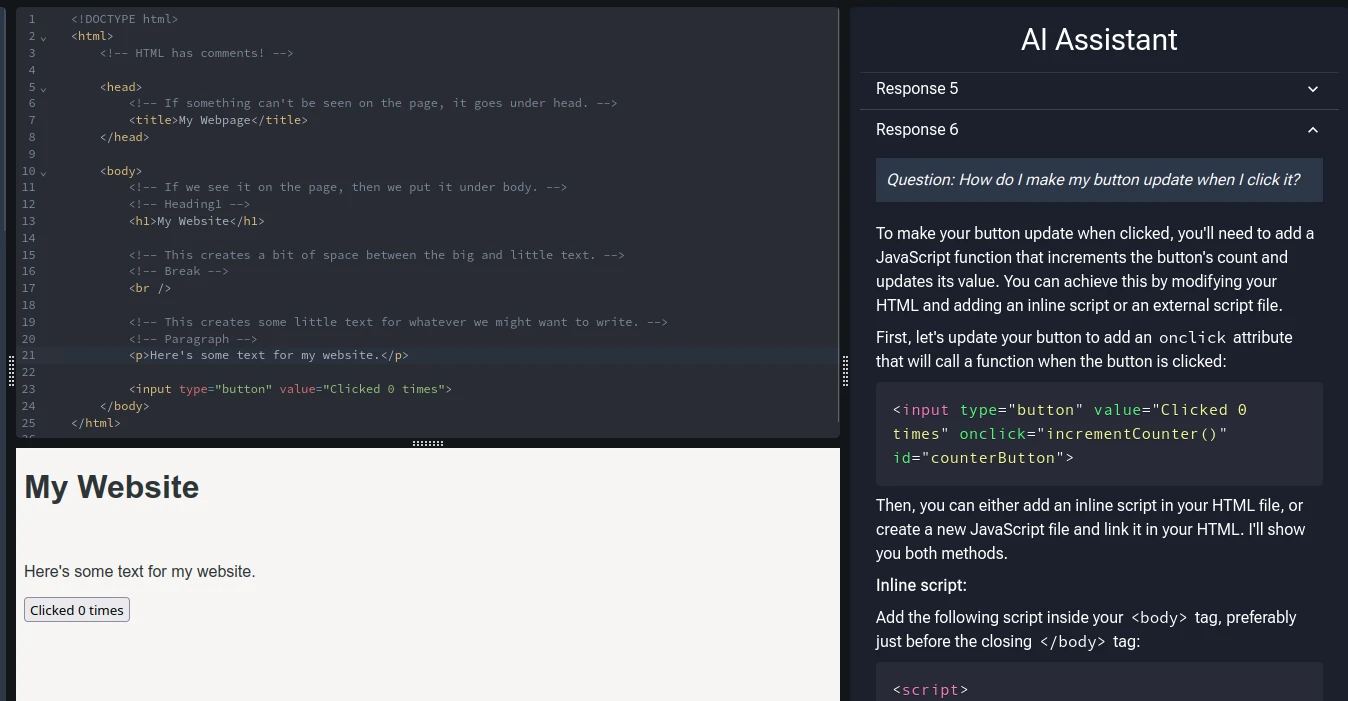

AI-generated articles (at least in Cratecode) weren't intended to be articles that people click on after searching for something. Okay, maybe a little bit, but the real intention behind them was to back up our AI assistant.

Cratecode's AI assistant (which you can demo for free here) is a tool that acts almost as an "automated tutor". Its job is to help bridge the gap between students and real, human instructors that's so prevalent in online learning. It can look at a lesson, analyze a student's code, and give them some hints for how to move forward. But one thing we realized was that it would be very helpful to have a "Further Reading" section in the response. If a user asks a question and their issue is related to a certain concept, it might be helpful to link to an article explaining that concept. And, as mentioned, AIs are incredibly bad at searching for things, but they're spectacular at making things up. So we used that to our advantage, having the AI make up an article URL and using another AI to generate the article itself.

That's how the first article is created. When someone asks for help about a topic, an article will be created for it automatically.
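To make the hand-off between the two AIs a bit more concrete, here's a rough sketch of how a made-up URL could be turned into a request for the second model. Everything here is an assumption for illustration: the helper names `topic_from_url` and `article_prompt`, the idea that the topic lives in the last URL segment, and the prompt wording are all hypothetical rather than what Cratecode actually runs.

```python
from urllib.parse import urlparse


def topic_from_url(url: str) -> str:
    """Turn a made-up article URL into a human-readable topic.

    Assumes the topic is the last path segment, with words separated
    by hyphens or underscores.
    """
    path = urlparse(url).path
    slug = path.rstrip("/").rsplit("/", 1)[-1]
    return slug.replace("-", " ").replace("_", " ").strip().title()


def article_prompt(url: str) -> str:
    """Build a (hypothetical) prompt asking a model to write the article."""
    topic = topic_from_url(url)
    return (
        f'Write a programming article titled "{topic}". '
        "Link to related articles, whether or not they exist yet."
    )
```

The design choice worth noticing is that the URL itself carries the topic, so the assistant never has to check whether the article exists before linking to it.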

But Why?

Well, why not? A big part of the reason behind making this was to experiment with new things that were never possible before. We can write code to automatically analyze people's code, give them hints on how to proceed, and write articles for them, and I think that's astonishing (and worth a bit of experimentation, at least). I'd also be lying if I said this isn't useful for SEO.

Really though, the point is to provide articles for areas where students are struggling. If one student generates an article, that article will probably be relevant to others. It becomes available to be linked to and searched. Doing things this way means that the articles we host are useful to someone.

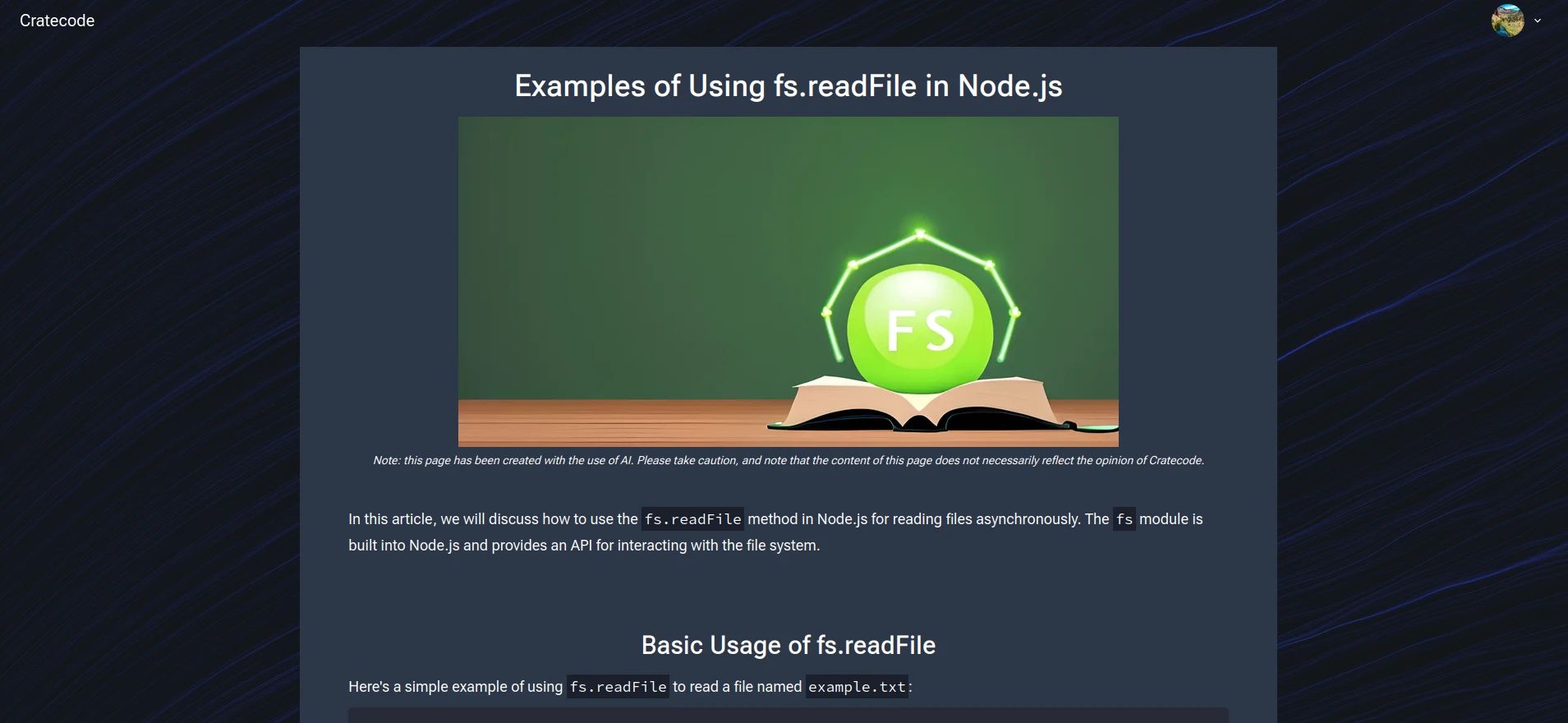

Header Images

One (pretty big) thing I've yet to talk about is the images. Each article gets its own banner image. They help people visually distinguish articles, but are mostly there for SEO. SEO isn't meant to be the focus of this article, so I've kept this section near the bottom, but the process behind image generation is pretty interesting, so I'm including it.

There are some pictures of the header images above, but here's one to give you a little refresh:

These images are made using Stable Diffusion. More specifically, they're made through Stable Horde, which is an awesome project to crowdsource AI image generation. Some of the images are generated by directly giving the generator the title and description of the article, but that leads to images that focus on keywords instead of giving a fun metaphor or any deeper meaning. These types of images are still pretty cool though:

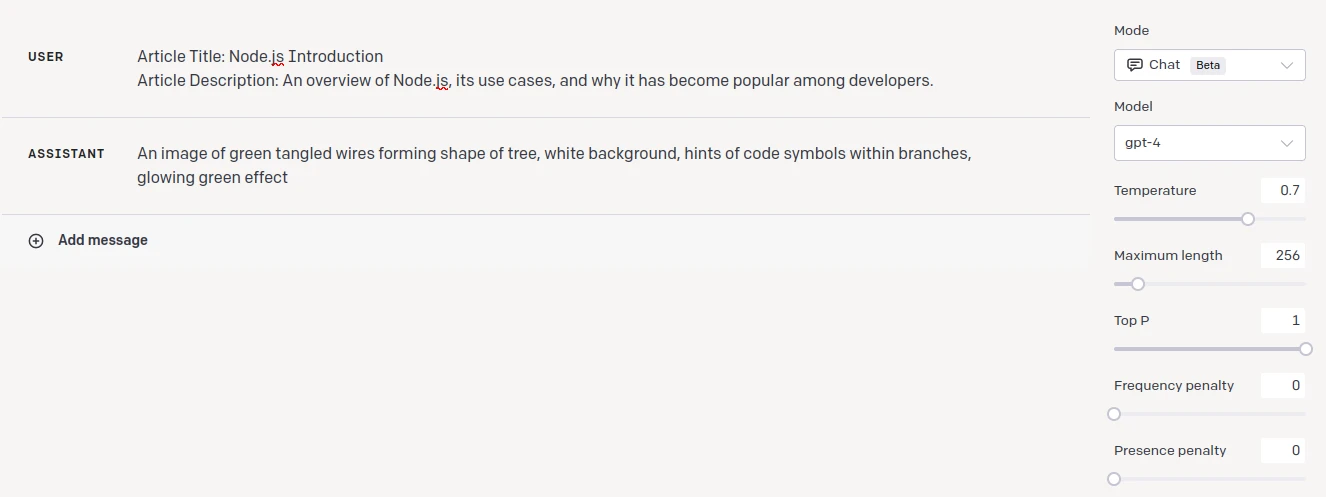

But most aren't generated like that. Instead, we have a separate stage through GPT-4 that turns an article title and description into an image prompt. GPT-4 is great at this as well, and creates prompts that ascribe some deeper meaning to the image:

Safety and Security

Safety and security are exceedingly important with any untrusted information, especially when it comes from AI. AI content is directly published under our name and likeness, so we need to take the utmost caution when dealing with it. To handle that, we have a number of policies to protect ourselves and the users viewing our content:

- All AI content that we release is marked as AI content. We also add warnings to steer users away from taking what the AI says as fact.

- All AI content may only be shown to a maximum of one user unless it's manually approved. This means that only the student requesting help from the AI assistant can see what it says, all AI-generated articles are private by default (they can only be seen by the person who generated them), and AI images are only shown to administrators until they're approved.

- All AI content is screened through an automatic content filter. For AI text content, we use OpenAI's Moderation Endpoint. AI images pass through two NSFW filters before being saved (and must still be manually approved after that).

AI content is incredible, but it isn't ready for automatic publishing. It could give incorrect (or even harmful) content, or produce outputs that aren't high quality.
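The visibility policy above boils down to a small access check. Here's a minimal sketch of it, assuming hypothetical `AIContent` and `Viewer` records and a `flagged` field set by whatever content filter is in use. None of these names come from Cratecode's codebase, and treating flagged content as never viewable is my own assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIContent:
    kind: str              # "text" (assistant replies, articles) or "image"
    owner_id: str          # the user whose request produced the content
    approved: bool = False
    flagged: bool = False  # assumed to be set by an automatic content filter


@dataclass
class Viewer:
    user_id: str
    is_admin: bool = False


def can_view(content: AIContent, viewer: Optional[Viewer]) -> bool:
    """Decide whether a viewer (None = signed-out visitor) may see content."""
    if content.flagged:
        return False  # Assumption: flagged content is never shown.
    if content.approved:
        return True   # Manually approved content is public.
    if viewer is None:
        return False
    if content.kind == "image":
        return viewer.is_admin  # Unapproved images: administrators only.
    # Unapproved text: only the person who generated it.
    return viewer.user_id == content.owner_id
```

Keeping the rule in one function like this makes the "maximum of one user until approved" guarantee easy to audit, since every page that renders AI content can call the same check.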

Final Thoughts

Hopefully some of this has been interesting to you. My goal with this article is really to share the incredible things possible with AI (and, as a marketing ploy, to tell you about Cratecode). You're at the end, so that probably means I did a decent enough job. AI is going to change the world, and it's coming in fast. I only hope we see some good out of it all.